The honest answer is: sometimes. Uploading a selfie to an AI tool is only as safe as the product's privacy policy, retention practices, and business model.

In short: use AI photo editors that state clearly what they store, how long they keep it, whether they train on user uploads, and how you can delete your data afterward.

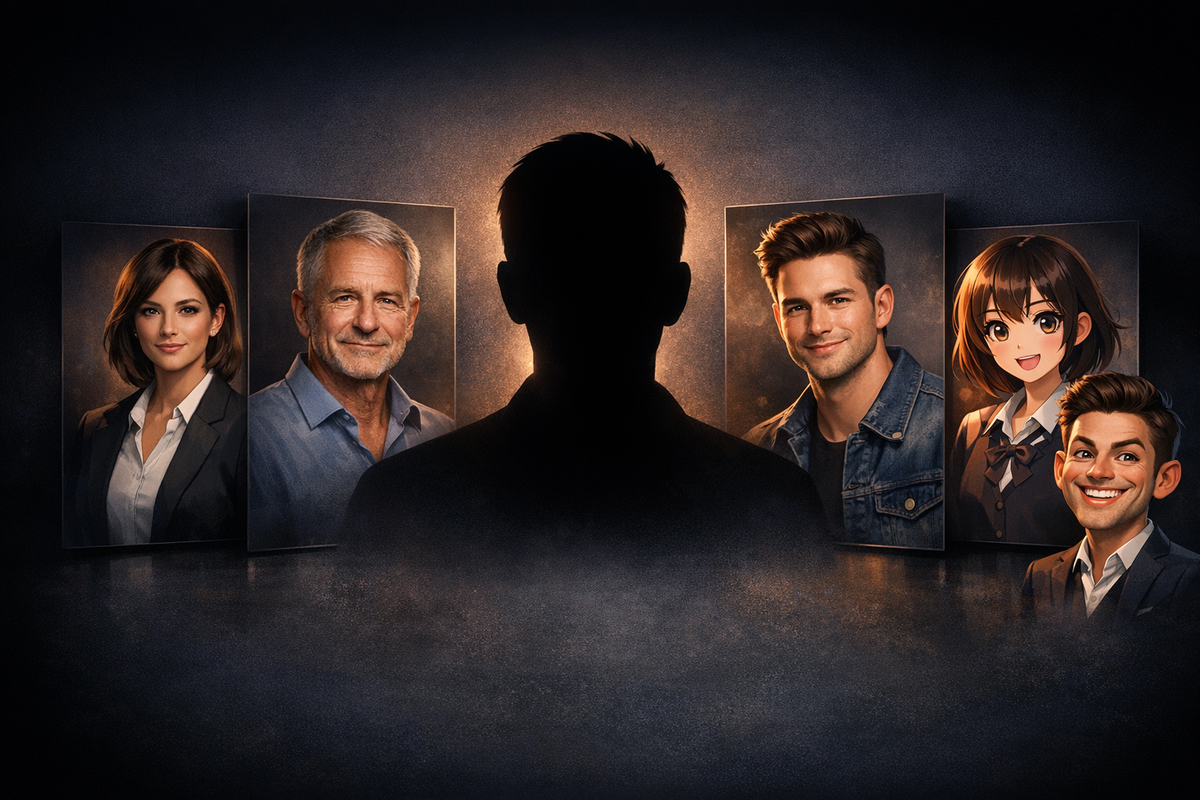

What actually happens when you upload a selfie

Most AI photo editors do some version of this flow:

- Your image is uploaded to a server or processed locally.

- The model reads facial structure, lighting, and other visual information.

- A transformed image is generated.

- The service decides whether to delete or retain the source image and the generated output.

The real question is not whether the AI "looks" at your face. Of course it does. The question is what happens to your images after processing.

The four policy checks that matter

Before uploading anything, look for clear answers to these four questions:

- Retention: How long are source selfies stored?

- Training: Are user images used to train current or future models?

- Deletion: Can the user delete uploads or generated results?

- Sharing: Is the data sold, licensed, or shared with third parties beyond core infrastructure vendors?

If the product is vague on those points, assume the risk is higher than the marketing page suggests.

Red flags worth taking seriously

These signals should make you stop and reconsider:

- the privacy policy is hard to find

- the policy grants the company broad, perpetual rights to uploaded images

- there is no retention window

- the app is free but the business model is unclear

- the company cannot explain whether uploads are used for model training

People often focus on "deepfake risk" in the abstract, but the more immediate problem is simpler: some apps keep more data, for longer, than users realize.

When the risk is lower

The safer tools usually share a similar profile:

- clear deletion language

- limited retention windows

- a business model based on paid generations or subscriptions

- a focused product purpose rather than viral novelty bait

That does not make any tool risk-free. It just means the company is behaving more like a product vendor and less like a data-harvesting growth experiment.

Practical upload rules

If you still want to use AI portrait tools, follow a few basic rules:

- Upload only the photos needed for the job.

- Keep IDs, personal documents, and other sensitive background details out of the frame.

- Prefer products with a visible privacy FAQ and support path.

- After generation, check the deletion options again and remove anything you no longer need.

Which route should you take next?

If you are still choosing a product, read Best AI Photo Editor for Profile Pictures.

For the privacy-specific reading path, see the AI Photo Editor Privacy FAQ.

If your main job is a credible work-facing portrait rather than a novelty filter, stay on the AI Professional Photo Editor route.